A look at the use of « Ethnic Affinities » by advertisers

Written by Vincent Toubiana

-

13 February 2017

Feeding the black box with its own food: how we tried to figure out how the “Ethnic Affinity” recommendations actually work.

Do advertisers serve different ads to Facebook users based on the users’ ethnicity? Last year, during a three-month fellowship in the U.S. Federal Trade Commission’s Office of Technology Research and Investigation (OTech), I set out to answer this question by studying Facebook’s “Ethnic Affinity” targeting options with OTech intern Yannis Spiliopoulos, a PhD candidate in Columbia University’s computer science department. In this post, I describe the work we presented at an FTC workshop in December. While “Ethnic Affinity” targeting is currently only available in the U.S., the questions it raises should be addressed before it reaches other countries. Indeed, some might think that “Ethnic Affinity” would not be regulated in the same way as ethnicity or other sensitive data that require specific consent to be processed. To understand how “Ethnic Affinity” is used, we tried to crack open this targeting black box.

This post first describes the types of targeted advertising that can be done on Facebook and provides background on Facebook’s “Ethnic Affinity” advertising program. It then gives an overview of the study we conducted to determine which ads were being targeted at Facebook users assigned to particular “Ethnic Affinities”. Finally, I share some of my thoughts about “Ethnic Affinity” advertising, including my concern that targeting based on “Ethnic Affinity” could result in price discrimination and in the exclusion of segments of society from economic opportunities.

Disclaimer: my fellowship with the FTC ended several months ago, and this post does not represent CNIL’s or FTC’s views.

What is Facebook targeting?

Users can be targeted in many ways on Facebook. In particular, they can be targeted based on data:

- they declare (gender, age, liked pages,…),

- they share (posts, comments, pictures,…),

- observed by Facebook (location, browsing on other websites, use of smartphone applications),

- shared with or bought by Facebook (which shops and retailers have your contact info, info sold by data brokers),

- inferred by Facebook based on the information listed above.

The “Ethnic Affinity” targeting option belongs to the last category; it relies on information Facebook inferred from your activities on Facebook. According to data collected by ProPublica, people in Ethnic Affinity segments “have this preference because [Facebook] thinks it may be relevant to them based on what [they] do on Facebook”.

Does Ethnic Affinity mean ethnicity?

We first heard of “Ethnic Affinity” when it was used to show different versions of a movie trailer to different affinity segments. Although this example was quite innocuous, people quickly realized that the targeting option could be misused as a proxy for ethnicity. Facebook stressed that they do not know the ethnicity of their users and that “people in the Hispanic Ethnic Affinity group are not all Hispanic”, but did not provide further information on how this group is created.

Although Facebook insists that an “Ethnic Affinity” is not the same as an “ethnicity”, Facebook’s team suggests using it as a reliable proxy. Such a fairly reliable proxy for ethnicity raises potential issues under current French legislation. Is “Ethnic Affinity” subject to the same law as “ethnicity” from a data protection point of view? Judging by how Facebook handled the ProPublica article, it seems that Facebook considers “Ethnic Affinity” sufficiently likely to be used as a proxy that some regulation should apply to it. Yet the question should be addressed by countries that are likely to see such a targeting option deployed by Facebook or other advertising companies. Answering it may require more data on Ethnic Affinity. For instance, while not every user in the “African American” Ethnic Affinity group is African American, it would be interesting to have an estimate of how accurate this proxy is. Are 95% of people in the African American affinity group African American, or only 60%?

A related and unanswered question is “How are Ethnic Affinity groups created?” We did not find a detailed description of the group creation process. Researchers from Berkeley and the Center for Democracy and Technology investigated this and suggested several ways these groups could have been constituted, including through machine learning.

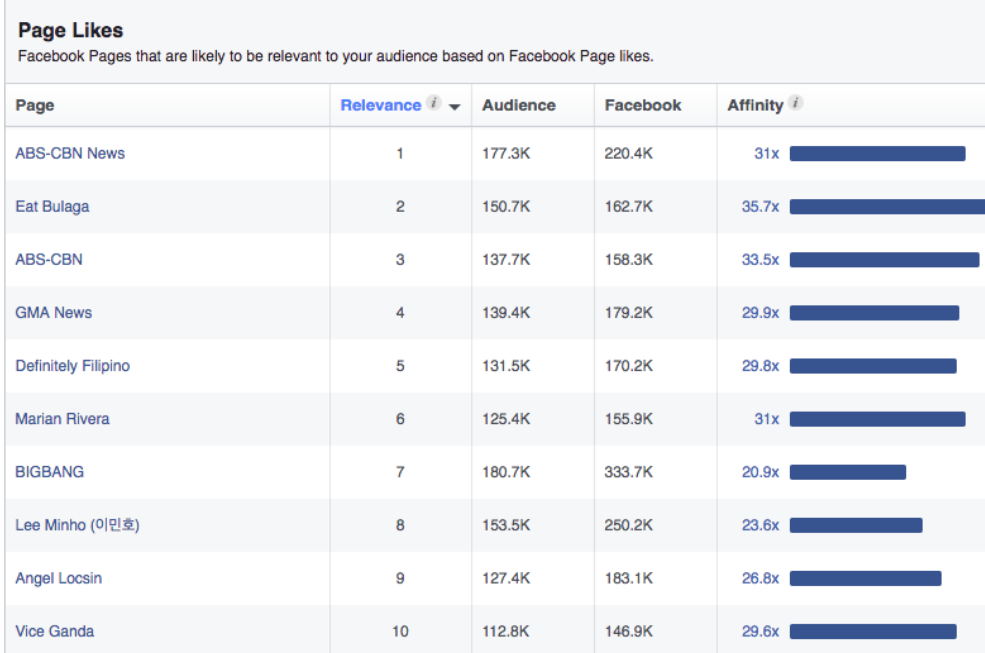

We decided to use the definition of an Ethnic Affinity group provided by Facebook and assumed that if we liked the same content as people in an “Ethnic Affinity” group, we would end up in the same group. Thus we first had to identify the content liked by people in the different “Ethnic Affinity” groups. Audience Insight, a tool for advertisers, makes this task possible: for every targeting option (liked page, demographic…), it lists the pages liked by people in the targeted audience. Once we had identified the content to like, we created accounts for each affinity group and made them “like” the same content as people in that Ethnic Affinity group. We hoped that these accounts would be assigned to the targeted Ethnic Affinity segment.

To give a more concrete picture, you’ll see below some of the top pages liked by members of the “Asian American” affinity group. To create users in this group, we liked 10 pages chosen at random from this list (we had about a hundred pages for each group).

Methodology

We focused only on the liked pages and randomized the remaining account attributes so as not to introduce any confounders. Despite our best efforts, some of the accounts were very quickly disabled by Facebook. In addition, the accounts started seeing ads at different times. These factors could affect our ability to detect some relationships.
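The account setup described above can be sketched in a few lines. This is only an illustration under assumptions, not the scripts we used: the page names are placeholders, and `age` and `gender` are hypothetical examples of attributes that could be randomized, since the post does not list which ones were.

```python
import random


def build_test_accounts(top_pages_by_group, accounts_per_group=3,
                        likes_per_account=10, seed=0):
    """Create account profiles that differ systematically only in liked pages.

    top_pages_by_group maps an affinity group name to the list of top pages
    reported for that group (about a hundred per group in the study).
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    accounts = []
    for group, pages in top_pages_by_group.items():
        for _ in range(accounts_per_group):
            accounts.append({
                "target_group": group,
                # Like 10 pages drawn at random, without repetition.
                "liked_pages": rng.sample(pages, likes_per_account),
                # Hypothetical attributes, randomized so that they are not
                # correlated with the group under study.
                "age": rng.randint(18, 65),
                "gender": rng.choice(["female", "male"]),
            })
    return accounts


# Placeholder page lists standing in for the real top-page lists.
top_pages_by_group = {
    "Asian American": [f"asian_american_page_{i}" for i in range(100)],
    "Hispanic": [f"hispanic_page_{i}" for i in range(100)],
}
accounts = build_test_accounts(top_pages_by_group)
```

Drawing the likes at random from the full list, rather than always taking the first ten pages, avoids tying the results to a handful of specific pages.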

Results

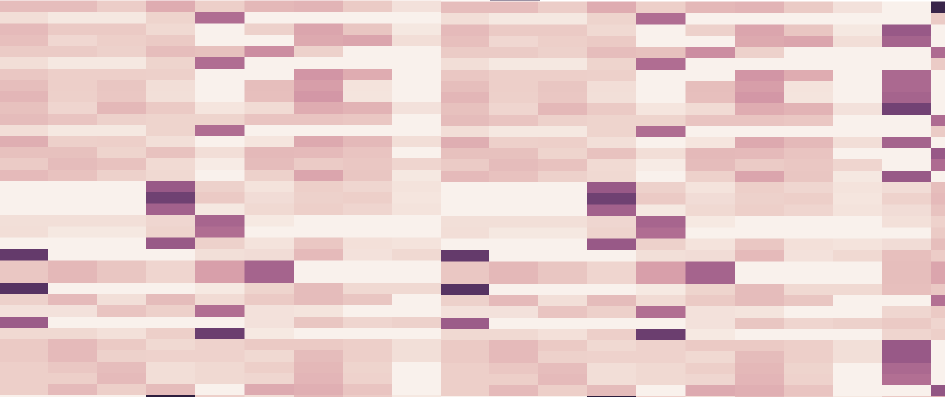

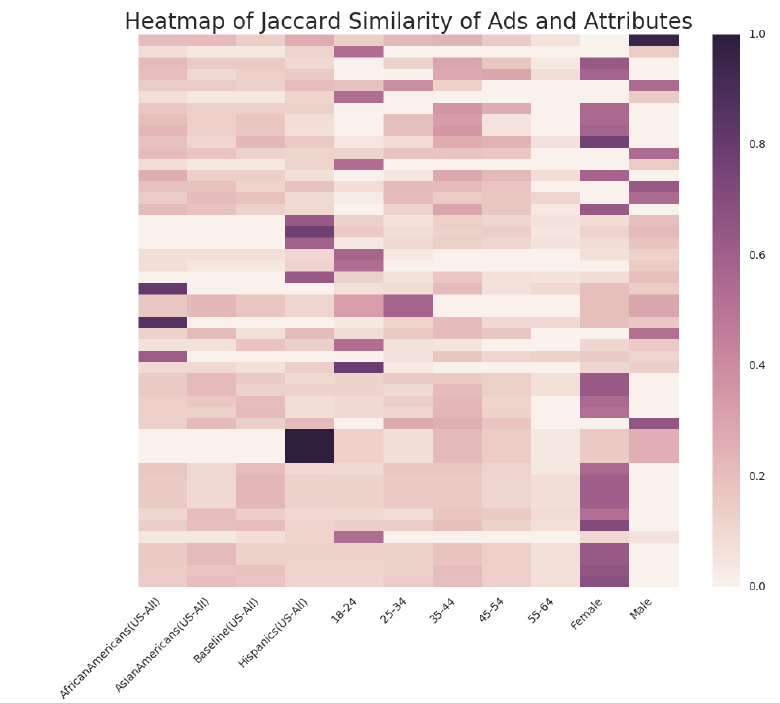

Finally, we collected the ads that these accounts were shown, and there were a lot of them. We tried to see whether some ads were only shown to specific groups and obtained the following heatmap:

This heatmap shows, for each ad and each attribute, how often the two appear together in the same account compared to the total number of times either the ad or the attribute appears. We only show the ads that appear at least half of the time with one of those attributes.
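The measure described here reads like a Jaccard index between the set of accounts that saw an ad and the set of accounts carrying an attribute. The sketch below computes it under that interpretation. Counting at the account level and reading "at least half of the time" as a score of 0.5 or more are both assumptions, and the sample accounts and ad names are invented for illustration.

```python
from collections import defaultdict


def jaccard_scores(accounts):
    """Score every (ad, attribute) pair by co-occurrence across accounts.

    accounts is a list of dicts with an "attributes" set and an "ads" set.
    The score is |accounts with both| / |accounts with either|.
    """
    accounts_with_ad = defaultdict(set)
    accounts_with_attr = defaultdict(set)
    for idx, account in enumerate(accounts):
        for ad in account["ads"]:
            accounts_with_ad[ad].add(idx)
        for attr in account["attributes"]:
            accounts_with_attr[attr].add(idx)

    scores = {}
    for ad, ad_accounts in accounts_with_ad.items():
        for attr, attr_accounts in accounts_with_attr.items():
            both = len(ad_accounts & attr_accounts)
            either = len(ad_accounts | attr_accounts)
            scores[(ad, attr)] = both / either
    return scores


def ads_to_display(scores, threshold=0.5):
    """Keep ads whose score reaches the threshold for at least one attribute."""
    return sorted({ad for (ad, _attr), s in scores.items() if s >= threshold})


# Invented sample data: four accounts, three attributes, three ads.
sample_accounts = [
    {"attributes": {"Hispanic"}, "ads": {"prepaid_plan", "shoes"}},
    {"attributes": {"Hispanic"}, "ads": {"prepaid_plan"}},
    {"attributes": {"Asian American"}, "ads": {"insurance", "shoes"}},
    {"attributes": {"African American"}, "ads": {"shoes"}},
]
scores = jaccard_scores(sample_accounts)
displayed = ads_to_display(scores)
```

In this toy data the "shoes" ad is seen by accounts in every group, so its score stays low for each attribute and it is filtered out, while the two group-specific ads are kept.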

Although this measure is not very thorough, we can immediately see that several ads are clearly related to attributes such as the “Hispanic” Ethnic Affinity. That encouraged us to look into whether we could show a causal link between some ads and their attributes. The following table shows ads that are targeted at users with particular attributes, and the sector of the companies targeting them:

| Target | Ad | Sector |
| --- | --- | --- |
| African Americans (US-All) | Fashion Mia fashionmia.com Your Picks: Top-Reviewed Dresses Get Free Shipping Over 79 Extra10 79 Extra 10 70.... | Clothes |
| African Americans (US-All) | Truth Examiner We Will Miss Obama! LIKE If You Agree. | News/Entertainment |
| African Americans (US-All) | Truth Examiner LIKE If You Will Miss Obama! | News/Entertainment |
| Asian Americans (US-All) | Jewelz Santiago, Agent Accidents happen, but my team will help you get back quickly. Get to a better State® | Insurance |
| Hispanics (US-All) | Always-on Data vzw.com/prepaid Two things that should never end: the holidays and data. 5 GB plan for $50/mo. | Telecom |
| Hispanics (US-All) | 10 GB plan for $70/mo vzw.com/prepaid Unlimited talk and text to Mexico, so you can send holiday wishes to your entire family. | Telecom |
| Hispanics (US-All) | Always-on Data vzw.com/prepaid Share holiday moments with friends and family. 5 GB plan for $50/mo. | Telecom |

We observed that the Asian American Ethnic Affinity group is targeted by insurance ads but, more significantly, that the Hispanic Ethnic Affinity group has the most ads for which we could find a causal link. Most of them come from a telecom company that clearly targets people in the Hispanic Ethnic Affinity group with ads (sometimes in Spanish) for prepaid products. In comparison, all other accounts received ads from the same company for postpaid subscriptions. These results suggest that some advertisers used the “Ethnic Affinity” targeting option to target users with specific products and excluded other groups from receiving the same ads.

The value of “Audience Insight”

One conclusion could be to forbid the use of “Ethnic Affinity” completely, or to regulate it like ethnicity. However, while “Ethnic Affinity” is a proxy for ethnicity, other proxies can be derived from it using Audience Insight. For instance, Audience Insight suggests that people in the “Asian American” Ethnic Affinity group are likely to like “ABS-CBN News” and “Eat Bulaga”. If it were no longer possible to target people using “Ethnic Affinity”, advertisers could simply target people who like “ABS-CBN News” and “Eat Bulaga”, and their ads would very likely reach mostly Asian Americans. Therefore, other proxies for ethnicity, such as the pages liked by users, can be derived from Audience Insight.
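To make the notion of a derived proxy more concrete, here is a minimal sketch of how one could measure how closely "likes both pages" reproduces an affinity segment. All user records below are invented, and the segment labels are assumed to be known only for the sake of the example; this is not data from our study.

```python
def proxy_quality(users, proxy_pages, segment):
    """Compare a page-like targeting rule with membership in a segment.

    Returns (precision, recall):
      precision = share of users matching the rule who are in the segment,
      recall    = share of the segment that the rule reaches.
    """
    # A user matches the rule when they like every page in proxy_pages.
    targeted = [u for u in users if proxy_pages <= u["liked_pages"]]
    in_segment = [u for u in users if segment in u["segments"]]
    hits = [u for u in targeted if segment in u["segments"]]
    precision = len(hits) / len(targeted) if targeted else 0.0
    recall = len(hits) / len(in_segment) if in_segment else 0.0
    return precision, recall


# Invented users for illustration only.
users = [
    {"liked_pages": {"ABS-CBN News", "Eat Bulaga"},
     "segments": {"Asian American"}},
    {"liked_pages": {"ABS-CBN News", "Eat Bulaga", "Other page"},
     "segments": {"Asian American"}},
    {"liked_pages": {"ABS-CBN News", "Eat Bulaga"}, "segments": set()},
    {"liked_pages": {"Other page"}, "segments": {"Asian American"}},
    {"liked_pages": {"Other page"}, "segments": set()},
]
precision, recall = proxy_quality(
    users, {"ABS-CBN News", "Eat Bulaga"}, "Asian American"
)
```

A rule with high precision would let an advertiser reach almost exclusively members of the segment without ever selecting the “Ethnic Affinity” option itself.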

It is worth noting that Audience Insight is not limited to the pages liked by Facebook users: it also provides location, demographic and employment data about the people in each group. All these types of data could be misused to derive proxies for ethnicity.

Regulating Grey Boxes

Finally, while we managed to create accounts assigned to the groups we wanted, we still don’t know how these groups were created in the first place. Facebook’s definition, “people who like the same content as African Americans”, suggests that Facebook initially knew the content liked by at least some African Americans. How Facebook identified this content remains unanswered. My personal hypothesis is that Facebook identified the ethnicity of a small group of users, either by asking them or by relying on facial analysis, and used this group to train its machine learning algorithm. The way these training groups are created tells us how these data will be used.